Opus 4.7 Feels Like a Downgrade? Here's the Prompt That Fixes It

Opus 4.7 feels like a downgrade? Your skills are running on 4.6 assumptions.

I spent yesterday running a full migration audit of my Claude Code skill library: 45 skills, one CLAUDE.md, a couple of hooks. By evening I'd made 250 find-and-replace edits and reverted 150 of them.

The prompt is below, paste-ready. What makes it worth using: six things it does that the official guide doesn't, plus the one mistake I made first.

The pain is real

Three big Reddit threads in the last 48 hours:

- r/ClaudeCode, 1.7K upvotes and 744 comments: "Opus 4.7 is legendarily bad. I cannot believe this."

- r/ClaudeAI, hundreds of comments: "Claude Opus 4.7 is a serious regression, not an upgrade"

- r/singularity, 1.1K upvotes: "Claude Power Users Unanimously Agree That Opus 4.7 Is A Serious Regression"

On X, power users are piling on too. @CoderDotBlog: "Claude Opus 4.7 won't respect the structured output instructions, making it useless. Going back to 4.6." @adxtyahq: "ignores instructions it used to follow… tries to 'call it a day' mid-task." @Shpigford: "opus 4.7 is the first time I've thought 'Anthropic may be moving too fast'." @SeanOverbeeke: "way too literal instead of 'getting me'. I feel like dumber."

What they describe, in rough order of complaint volume:

- System prompts and structured-output rules getting silently ignored.

- Mid-task bail-outs, where 4.7 explains at length why it's not doing the thing instead of doing the thing.

- Token burn up 20-47%, because the new tokenizer emits more tokens for equivalent content.

- Hallucinations worse than 4.6 on multi-step work, especially inside Claude Code.

- A trust gap in Anthropic's communication about the regression.

I hit versions of the first three before I'd finished breakfast. That's why I sat down and ran the audit.

But the wins are real too. They're just quieter.

X amplifies rage. Reddit has room to consider. The positive case for 4.7 lives in the threads you have to scroll for:

- r/ClaudeCode: "Claude Opus 4.7 Changed How Thinking Works, and It's Actually Great" — "For interactive coding work? Summarized is the right default. Less noise, faster reading, same signal."

- r/ClaudeAI: "Here are my thoughts after 14h of full runs on Opus 4.7" — "I had a hard time solving tricky quant system bugs (Rust-Cython) with Opus 4.6 max and GPT-5.4 xhigh for three days in a row, but Opus 4.7 solved it in a 10h long running session."

- r/ClaudeCode: "This might be unpopular but Opus 4.7 is actually quite goood on Claude Code" — "After having abandoned Opus 4.6 1M after a terrible experience, I now happily enjoy Opus 4.7 1M for long context work."

- r/ClaudeCode comment: "Max Opus 4.7 has been a game changer for me in the positive direction. Leagues better than 4.6."

- r/ClaudeCode comment: "4.7 is significantly better at generating detailed plans… the plan it generates is nearly flawless to implement."

The pattern across the real positives: Max tier, xhigh effort, long-context (1M), hard debugging, plan generation, summarized thinking. The wins are specific and they're concentrated where you're giving the model room to think.

Which is the whole point of migrating. If your skills are still running on 4.6 defaults, if your effort is still on high or medium, if your system prompts still assume 4.6 will infer what you meant, you're eating the regression without ever touching the upgrades. Fix the skills and you get both sides.

What actually changed in 4.7

The official migration guide lists the behavioral shifts, but the framing is gentle. The shifts that matter for anyone running a skill library or non-trivial system prompt:

- More literal instruction-following. Vague or polite-but-ambiguous rules get executed exactly as written. The specificity you relied on 4.6 to infer no longer gets inferred.

- Fewer subagents and fewer tool calls by default. Skills that used to fan out implicitly now need explicit triggers.

- Stricter effort calibration. `low` and `medium` scope tighter than on 4.6. For coding or agentic work, start at `xhigh`.

- Response length adapts to perceived complexity. If your output shape depended on 4.6 defaults, calibrate explicitly.

- More direct tone, less validation-forward phrasing. Voice-dependent content that leaned on 4.6 warmth needs a pass.

- New tokenizer emits 20-47% more tokens. Budget headroom and watch your rate limits.

The first one is the big one. It's also where I over-corrected.
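
For the tokenizer shift in the list above, the headroom arithmetic is easy to sketch. This is a rough estimate only: the 20-47% range is the figure quoted in this post, and the 100,000-token budget is a made-up number for illustration, not a real limit.

```python
# Rough sketch: what a 20-47% tokenizer inflation does to a fixed token budget.
# The inflation range is the figure quoted in the article; the budget is hypothetical.

def inflated_tokens(tokens_on_46: int, inflation: float) -> int:
    """Estimate the 4.7 token count for content that cost `tokens_on_46` on 4.6."""
    return round(tokens_on_46 * (1 + inflation))


def effective_budget(budget: int, inflation: float) -> int:
    """Estimate how much 4.6-sized content still fits in the same budget on 4.7."""
    return int(budget / (1 + inflation))


if __name__ == "__main__":
    budget = 100_000  # hypothetical per-session budget
    for inflation in (0.20, 0.47):
        print(
            f"inflation {inflation:.0%}: "
            f"{budget} old tokens cost about {inflated_tokens(budget, inflation)}, "
            f"and the same budget holds about {effective_budget(budget, inflation)} old tokens"
        )
```

At the top of the range, a budget that used to hold 100,000 tokens of work holds roughly 68,000 tokens' worth, which is why the headroom matters before you touch a single skill.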

What this prompt adds over the official migration guide

- `Never X (because Y)` stays negative. Bulk-flipping to positives drops the failure mode; 4.7 needs the landmine spelled out. I learned this one the hard way: I read the guide's line about "prefer positive examples", spawned 20 subagents, flipped 250 phrases, and had to revert 150 by hand. One of them was my invoicing rule, "Never use the API to update items that need bilingual names, it silently wipes the English field", which I'd softened to "Always use the web UI for bilingual names." Same rule on paper. In practice, the positive version reads as a preference and 4.7 takes the API path anyway. The `Never` was the load-bearing piece.

- Remove "report progress every N steps" scaffolding. 4.7 does this natively; old scaffolding fights the built-in behavior.

- "Consider checking X" → "if Y, run tool Z". 4.7 spawns fewer subagents / tool calls by default. Explicit triggers fire reliably; soft ones are inconsistent.

- Replace "as appropriate" / "when relevant" / "as needed" with the actual condition. 4.6 inferred specifics. 4.7 executes literally — vague words lose their teeth.

- Don't fork marketplace/upstream skills. Pull their 4.7 updates instead. Hand-patching creates a fork you now own. Active upstream usually ships faster than you'd maintain a fork alone.

- Raise effort to `xhigh` for coding / agentic work. `low` and `medium` scope tighter on 4.7. Lowest-effort lever: try it before touching a single skill.
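
The vague-phrase item in the list above is the easiest one to check mechanically before you involve the model at all. Here is a minimal sketch, assuming your skills live as `SKILL.md` files under `.claude/skills/`; the function names are hypothetical, and the phrase list is just the three examples named above. It only reports matches and edits nothing, since each hit still needs a human decision about what the actual condition is.

```python
import re
from pathlib import Path

# The three phrases named in the list above; extend with your own.
VAGUE_PHRASES = ["as appropriate", "when relevant", "as needed"]
VAGUE_RE = re.compile("|".join(re.escape(p) for p in VAGUE_PHRASES), re.IGNORECASE)


def find_vague_phrases(text: str) -> list[tuple[int, str, str]]:
    """Return (line_number, matched_phrase, stripped_line) for every vague phrase."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in VAGUE_RE.finditer(line):
            hits.append((lineno, match.group(0).lower(), line.strip()))
    return hits


def scan_skills(root: Path) -> dict[Path, list[tuple[int, str, str]]]:
    """Scan every SKILL.md under `root` (the layout is an assumption). Read-only."""
    results = {}
    if not root.is_dir():
        return results
    for path in sorted(root.rglob("SKILL.md")):
        hits = find_vague_phrases(path.read_text(encoding="utf-8"))
        if hits:
            results[path] = hits
    return results


if __name__ == "__main__":
    for path, hits in scan_skills(Path(".claude/skills")).items():
        for lineno, phrase, line in hits:
            print(f"{path}:{lineno}: '{phrase}' in: {line}")
```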

Here's the prompt. Paste it into a fresh Claude Code session and run the phases in order.

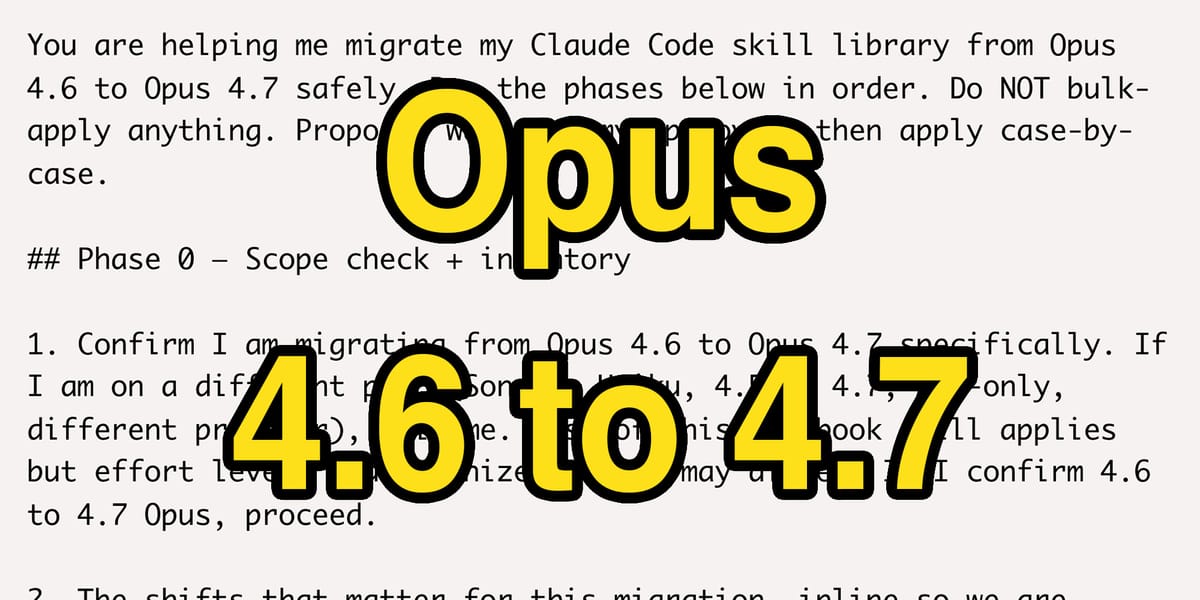

You are helping me migrate my Claude Code skill library from Opus 4.6 to Opus 4.7 safely. Run the phases below in order. Do NOT bulk-apply anything. Propose, wait for my approval, then apply case-by-case.

## Phase 0 — Scope check + inventory

1. Confirm I am migrating from Opus 4.6 to Opus 4.7 specifically. If I am on a different pair (Sonnet, Haiku, 4.5 to 4.7, API-only, different provider), tell me. Most of this playbook still applies but effort levels and tokenizer deltas may differ. If I confirm 4.6 to 4.7 Opus, proceed.

2. The shifts that matter for this migration, inline so we are calibrated:

- More literal instruction-following. Vague or polite-but-ambiguous rules get executed as written.

- Fewer subagents and fewer tool calls by default. Explicit triggers now required.

- Stricter effort calibration. low and medium scope tighter than on 4.6.

- Response length adapts to perceived complexity.

- More direct tone, less validation-forward phrasing.

- New tokenizer emits 20-47% more tokens.

Full migration guide for reference: https://platform.claude.com/docs/en/about-claude/models/migration-guide

3. Inventory. List every skill in `.claude/skills/` and `~/.claude/skills/` (skip if empty). Read `CLAUDE.md` if it exists.

4. For each skill, classify OWNED vs UPSTREAM:

- UPSTREAM signals: name has a plugin prefix (`<plugin>:<skill>`), frontmatter has `metadata:`, `version:`, `source:`, `upstream:`, or `origin:` fields, path is under a plugin cache, or git history shows only a single import commit. Phases 1-3 SKIP these.

- OWNED: everything else, user-authored skills.

- AMBIGUOUS: ask me. Do not guess.

5. Confirm what effort level I am running. If low or medium for coding or agentic work, recommend xhigh.

6. Report: scope confirmed (4.6 to 4.7 Opus), OWNED count, UPSTREAM count, CLAUDE.md size if present. Wait for me to say "proceed" before Phase 1.

For UPSTREAM skills, the narrow treatment: if the source is known, fetch the latest upstream version and diff. If upstream has shipped a 4.7 update, recommend I pull it rather than hand-edit. If I have locally customized an upstream skill, flag the fork risk and wait for my call.

## Phase 1 — Fleet audit (no edits)

For EVERY OWNED skill (not a sample, not a top-N, all of them), score on four dimensions:

- Trigger specificity (0-3): does the `description` list concrete trigger phrases a literal-reading 4.7 will match on? 3 = excellent; 0 = vague "use when working with X".

- Size (0-3): 3 = under 100 lines; 2 = 100-300; 1 = 300-500; 0 = over 500.

- Negative-framing count: count occurrences of never, don't, do not, avoid, NEVER. Just count. Do NOT flag for flipping. Many negatives are load-bearing.

- Redundant scaffolding: flag skills that tell the model to "summarize every N steps", "report progress after each phase", or similar. 4.7 does this natively.

Output a ranked table with every OWNED skill, worst scores first. Include a column for "review depth": FULL (scored poorly on any dimension), LIGHT (healthy across the board).

## Phase 2 — Per-skill triage (propose, don't apply)

Review EVERY OWNED skill. Full review on skills marked FULL in Phase 1. Light pass on the rest — just confirm no hidden issues by reading the full SKILL.md.

For each skill, propose:

- Redundant scaffolding to remove. Quote specific lines that duplicate what 4.7 handles natively.

- Literal-trigger risk. Does the skill have polite-but-ambiguous rules ("handle appropriately", "be mindful of", "as needed", "when relevant")? 4.7 will not infer the specifics. Propose concrete rewrites with explicit conditions.

- Negatives worth positive-reframing. The ONLY candidates are vague "avoid X" or "don't Y" with no concrete failure mode attached. Any NEVER <specific-landmine> or Don't X (causes Y) STAYS negative. Flag those explicitly as "KEEP NEGATIVE — load-bearing" with the failure mode quoted.

- Subagent/tool-call triggers. If the skill used to rely on Claude spontaneously spawning subagents or calling tools, make them explicit. Rewrite "consider checking X" as "if Y condition, run tool Z" with the concrete trigger.

Present findings per skill in this shape:

```

## <skill-name>

- REMOVE (redundant scaffolding): "<quote>" — reason

- REWRITE (literal-trigger risk): "<quote>" → "<proposed concrete rewrite>"

- KEEP NEGATIVE (load-bearing): "<quote>" — failure mode: <what breaks>

- MAKE EXPLICIT (subagent/tool trigger): "<quote>" → "<explicit condition + action>"

```

If a skill has no changes worth proposing, say so and move on. Do not invent work.

Wait for my explicit approval per item before applying.

## Phase 3 — Apply approved edits, case-by-case

Only edit what I have approved. For each approved edit:

1. Use the Edit tool with replace_all=false, one change at a time.

2. After each edit, confirm the file still parses (frontmatter intact, markdown valid).

3. If you rewrite a negative as positive, INCLUDE the original failure mode. Example: "Use the web UI for bilingual names" becomes "Use the web UI for bilingual names, the API silently wipes the English field". Positive verb plus explicit consequence is better than either polarity alone.

## Phase 4 — Static verification (no side effects)

Do NOT execute any edited skill against real data. Skills that write to Gmail, calendars, billing, health files, CRMs, or any external state will produce unwanted artifacts if run as a test. Verification here is static only:

For each edited skill, re-read the full SKILL.md top to bottom and check:

- Does the skill still parse cleanly (frontmatter intact, markdown valid, no broken links)?

- Does each edit preserve the failure mode it was attached to? If a negative became positive, is the consequence still in the sentence?

- Are there contradictions between the edits and surrounding rules that were not touched?

- Do cross-references to other skills still resolve?

Report per skill: "static checks pass" or flag specific issues.

When I am ready, I will run the edited skills in my own workflow on tasks I would run anyway. Do not initiate live tests.

If I use git, commit per skill so a revert is cheap. If I do not, back up the file before each edit.

## Anti-patterns (from my own migration)

- Bulk-flipping every Never X to Always Y across the fleet in one pass. Silent regressions where load-bearing negatives lose their specificity.

- Treating a one-line remark in the migration guide as a headline mandate and applying it hundreds of times.

- Running all phases in one shot without stopping between them. No clean rollback points.

- Keeping "report progress after each step" scaffolding. Now fights 4.7's native progress updates.

- Leaving polite-but-ambiguous phrasing intact ("as appropriate", "when relevant"). 4.7 will not fill in the blanks 4.6 filled in.

## If something breaks after migration

- Revert the most recent skill-level change first, not the whole migration.

- If a load-bearing negative got flipped to positive, put the negative back verbatim. The rule needs its failure mode to stay enforceable.

## One meta-rule worth adding to your CLAUDE.md or system prompt

> Write for literal readers. Claude executes instructions exactly as written on 4.7. Prefer positive imperatives with explicit objects. Keep load-bearing negatives negative; the specific failure mode is what makes the rule enforceable. Replace vague adverbs ("usually", "typically", "as appropriate") with the actual condition.

Start with Phase 0 and report back before touching anything.
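
That is the end of the prompt. Separately from it, two of the Phase 1 dimensions (size and negative-framing count) plus the scaffolding flag are mechanical enough to spot-check yourself. The sketch below is one possible reading, not part of the prompt: the function names and the scaffolding patterns are hypothetical, and because the size bands overlap at exactly 300 and 500 lines, the code arbitrarily assigns those to the higher score. Like Phase 1, it only counts negatives and does not judge which ones are load-bearing.

```python
import re

# Words Phase 1 asks to count. Case-insensitive, so NEVER is covered by "never".
# Both straight and curly apostrophes are accepted in "don't".
NEGATIVE_RE = re.compile(r"\b(?:never|don['’]t|do not|avoid)\b", re.IGNORECASE)

# Hypothetical patterns for progress-report scaffolding; adjust to your own phrasing.
SCAFFOLDING_RE = re.compile(
    r"summarize every \w+ steps|report progress (?:after|every)", re.IGNORECASE
)


def size_score(line_count: int) -> int:
    """3 = under 100 lines, 2 = 100-300, 1 = 300-500, 0 = over 500.

    Exactly 300 scores 2 and exactly 500 scores 1 (a tie-break chosen here).
    """
    if line_count < 100:
        return 3
    if line_count <= 300:
        return 2
    if line_count <= 500:
        return 1
    return 0


def audit_skill(text: str) -> dict:
    """Count-only audit of one skill file's text. Flags nothing for flipping."""
    line_count = len(text.splitlines())
    return {
        "lines": line_count,
        "size_score": size_score(line_count),
        "negatives": len(NEGATIVE_RE.findall(text)),
        "scaffolding": bool(SCAFFOLDING_RE.search(text)),
    }


if __name__ == "__main__":
    sample = (
        "Never use the API for bilingual names.\n"
        "Don't skip review.\n"
        "Report progress after each phase.\n"
    )
    print(audit_skill(sample))
```

If the numbers here disagree with the table Claude produces in Phase 1, that is worth asking about before approving anything in Phase 2.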

The point

The real 4.7 migration is not "flip your prohibitions." It is: stop overreading general advice as a specific mandate. Claude follows instructions more literally now, which means the instructions need to keep the specificity they always had. A one-line remark in a migration guide is a one-line remark, not a headline rule; weight your edits by how much the source actually emphasizes them.

Your 4.6 skills were carrying weight you did not notice. The upgrade exposes where that weight was, and only some of it needs to change. The tidier your prompts look after migration, the more likely you softened something that was doing real work.

The wins are on the other side. They're specific — xhigh effort, long-context sessions, plan generation, hard debugging — and they show up once your skills stop asking 4.7 to behave like 4.6. The rage is loud because people are feeling the regression without accessing the upgrade. Migrate the skills, turn the effort up, and you cross over.
